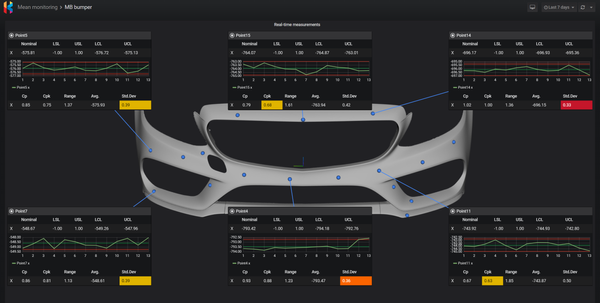

Automate measurement collection using free tools

Manually moving and parsing measurement data can be a nightmare for everyone involved. Getting someone to write a custom app to do it for you could bring another set of problems.

In this guide, you will learn how to set up your own automated measurement collection workflow using free, proven, and powerful tools. The best part is that you don’t need to be a software developer to do this!

Here is our high-level process:

- Measure part in GOM Inspect

- Export XML with measurements to a network drive

- Node-RED monitors shared folder and kicks off the data load flow

- Read and parse XML

- Save parts, features, characteristics and measurements to SQL database

- Move XML file to archive folder

Download and install:

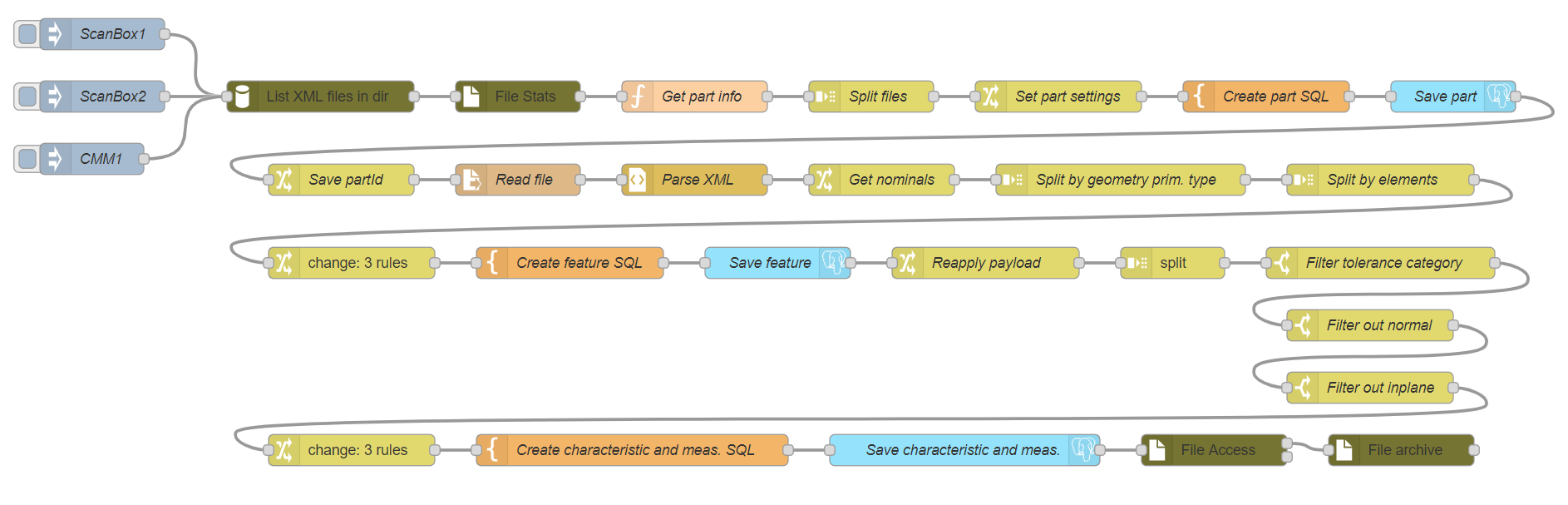

Before we go into configuration, let me introduce you to the tool that will enable us to do all this – Node-RED.

What is Node-RED?

Originally designed and built by IBM, Node-RED is a free, open source, flow-based development tool for wiring together hardware devices, API’s, databases, and online services as part of the Internet of Things. Users interact with Node-RED via a browser-based flow editor. Each flow consists of a set of linked nodes that have a well-defined purpose.

With visual representation of a computational problem, anyone can look at each step in a flow and get a sense of what it is happening without reading any code.

How is Node-RED used?

Node-RED is used in home automation, building control, PLC devices to ETL (Extract, Transform, Load) and BPM (Business Process Management) workloads. Maybe you’re already using it to collect in-line measurements.

Who is using it?

Check out nodered.org for some examples. It is tremendously popular in the IoT space, but it is also used in robotic process automation, manufacturing, and even software development. For those who need enterprise support, Siemens offers Node-RED as part of its PLM software package.

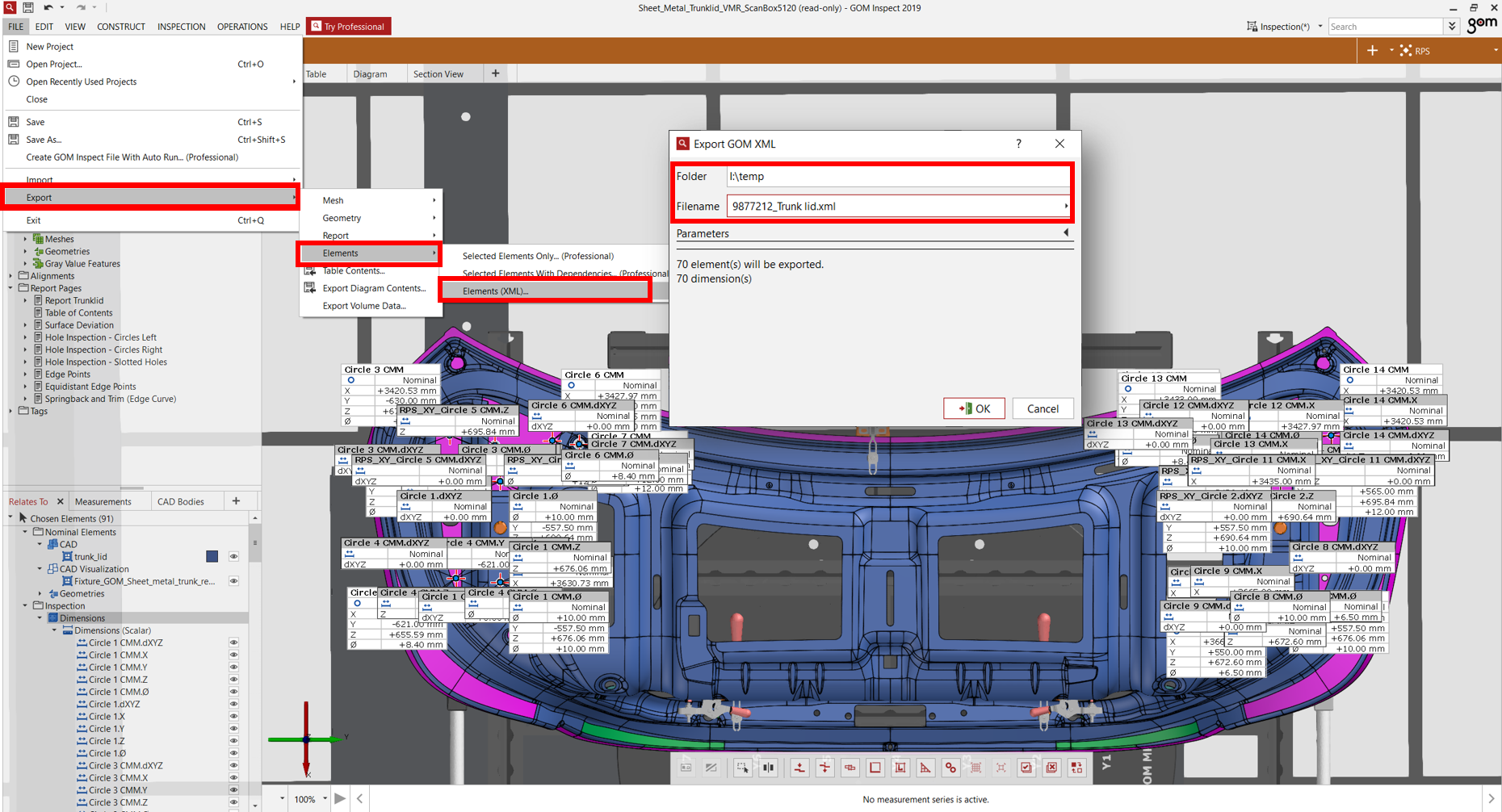

GOM Inspect

No special configuration needed. The free version won’t let you automate XML export, so this step must be manual unless you have a paid version.

Open your project, select elements you want to export, then

FILE -> Export -> Elements -> Elements (XML).

Select folder and type in name of your file.

To capture part information, name your file in specific format: partNumber_partName.xml or partName.xml. i.e. 9877212_Trunk lid.xml

Database

We need to have tables to store part, feature, characteristic, and measurements. If you already have your own database structure, skip this section and just modify queries in orange template nodes accordingly.

Create database then run following scripts to create tables used in this flow.

CREATE TABLE parts

(

id int NOT NULL GENERATED ALWAYS AS IDENTITY ( INCREMENT 1 START 1 ),

number varchar(40) NOT NULL,

name varchar(80),

description varchar(250),

CONSTRAINT parts_pkey PRIMARY KEY (id),

CONSTRAINT parts_number_key UNIQUE (number)

)

CREATE TABLE features

(

id int NOT NULL GENERATED ALWAYS AS IDENTITY ( INCREMENT 1 START 1 ),

partid int NOT NULL,

name varchar(40) NOT NULL,

description varchar(250),

type varchar(40),

CONSTRAINT features_pkey PRIMARY KEY (id),

CONSTRAINT features_partid_name_key UNIQUE (partid, name),

CONSTRAINT features_partid_fkey FOREIGN KEY (partid)

REFERENCES parts (id) MATCH SIMPLE

ON UPDATE NO ACTION

ON DELETE NO ACTION

NOT VALID

)

CREATE TABLE characteristics

(

id int NOT NULL GENERATED ALWAYS AS IDENTITY ( INCREMENT 1 START 1 ),

featureid int NOT NULL,

name varchar(40) NOT NULL,

nominal float NOT NULL,

usl float,

lsl float,

description varchar(250),

CONSTRAINT characteristics_pkey PRIMARY KEY (id),

CONSTRAINT characteristics_name_featureid_key UNIQUE (name, featureid),

CONSTRAINT characteristics_featureid_fkey FOREIGN KEY (featureid)

REFERENCES features (id) MATCH SIMPLE

ON UPDATE NO ACTION

ON DELETE NO ACTION

NOT VALID

)

CREATE TABLE measurements

(

id int NOT NULL GENERATED ALWAYS AS IDENTITY ( INCREMENT 1 START 1 ),

characteristicid int NOT NULL,

value float,

timestamp timestamp with time zone,

CONSTRAINT measurements_pkey PRIMARY KEY (id),

CONSTRAINT measurements_characteristicid_timestamp_key UNIQUE (characteristicid, timestamp),

CONSTRAINT "measurements_characteristicsId_fkey" FOREIGN KEY (characteristicid)

REFERENCES characteristics (id) MATCH SIMPLE

ON UPDATE NO ACTION

ON DELETE NO ACTION

NOT VALID

)

Configure Node-RED

Node-RED comes with a most of the nodes we need. However, in order to work with databases, files and directories we have to install additional nodes from the NPM repository. Go to data folder in your Node-RED installation directory. If you running it on Docker, you need to execute interactive bash shell on the container and go to data folder.

docker exec -it mynodered /bin/bash

bash-5.0$ cd /dataInstall following nodes and restart your container.

npm install node-red-contrib-readdir

npm install node-red-contrib-fs-ops

npm install node-red-contrib-re-postgresTo use Microsoft SQL server, install mssql node:

npm install node-red-contrib-mssqlTo use Oracle, run:

npm install node-red-contrib-oracledb-modCopy flow

Go to Node-RED flow library and copy our flow. Next, switch to your Node-RED instance and go to main menu, import nodes:

After import, double click on blue Postgres node and enter your server information. Next, edit ScanBox1 and enter path to folder with xml files. Optionally, you can add a repeater to inject node so flow can execute on its own.

Click Publish and then execute the flow by pressing the button next to ScanBox1 node.

Run following queries and verify measurements were loaded correctly:

SELECT * FROM parts

SELECT * FROM features

SELECT * FROM characteristics

SELECT * FROM measurementsGeneral notes

File name should be in a specific format: partNumber_partName.xml or partName.xml i.e. 9877212_Trunk lid.xml

Measurement date is based on file modify date.

You could add a file watcher instead of an interval; however, depending on your operating system and where files are located, it might not be supported or not working correctly. Pulling is a safe bet.

Function node Get part numbers could be replaced with Change function with a complex expression function. However, it was much easier to just write simple JavaScript to parse part number and part name.

SQL code in the flow is written in a way that it won’t create duplicate parts, features, characteristics, and measurements, even if you reprocess the same file.

When running on Docker, remember to add your drive to resources so it can be bind mounted.

Final thoughts

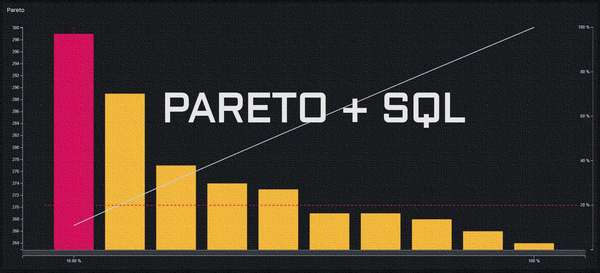

The flow described above should cover most of the base use-cases. We could insert log to database on each load and create email alerts when we don’t see any measurements loaded within specified time window. We could also add new flow to clean up the archive folder.

I hope this gave you an idea on how powerful Node-RED is and how it can be used not just for sensor reading but also automating measurement collection.